Hello, thanks for having me. Here's a URL where you can find the notes and slides for my talk. I'll be touching on a lot of things and I'm aware they won't all be useful or relevant to everyone. If you want to learn more about a specific thing, you'll find a link on the list.

I'm Jessamyn West and I've been a technology educator in library settings for 25 years. I tend to talk about what I know, which means not a lot of TikTok in this presentation, though I have been learning how to use some of their tools. My vision is good but not improving, my hearing is okay but I have some auditory issues, my fine motor skills are pretty good. I manage a lot of anxiety. I'm of the opinion that there's a place for people of all levels of ability in accessibility work, but I'm more of an accessibility advocate in library settings.

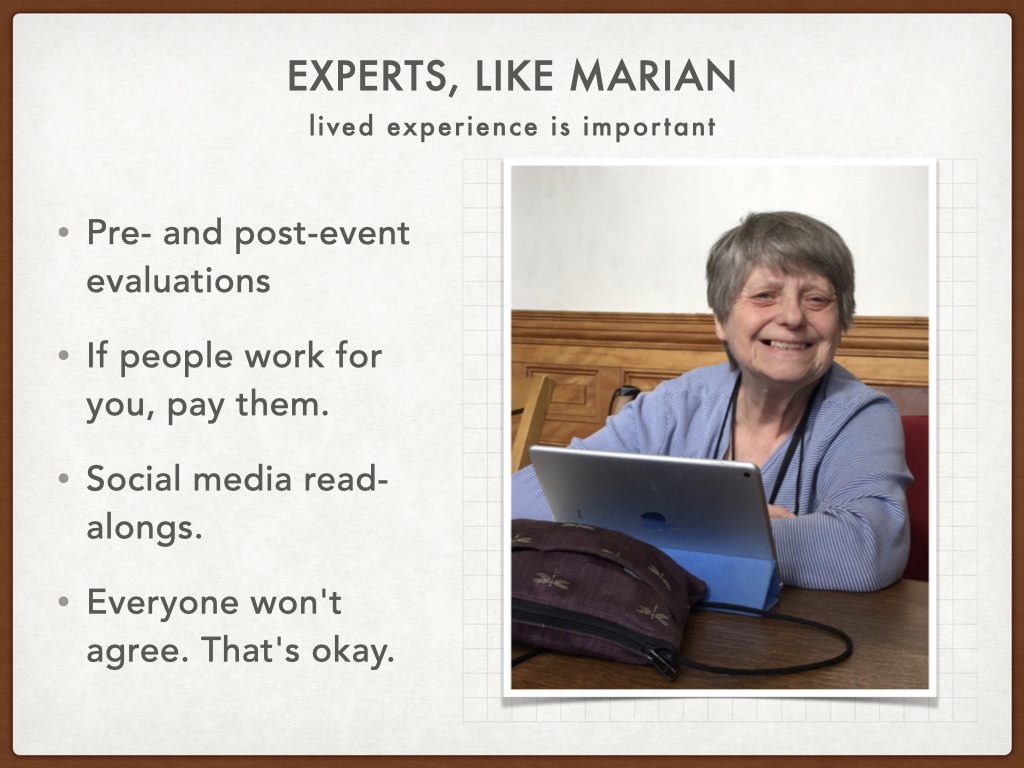

This is my friend Marian. She is deaf, or deaf enough to need two powerful hearing aids. She is retired but is spending her retirement time harassing people to make things more accessible for hearing impaired people. She's good company. It's really important to listen to the communities of people who you are trying to improve access for. They won't always agree on what to do, but they can give you feedback on how they experience things. And if they're helping you do your work, find a way to compensate them for their time and efforts.

"Nothing about us without us" is a political slogan that the disability rights movement used around the time the ADA was being discussed, debated and finally passed in 1990. 1990!! If you haven't yet made inroads with disability communities within your community, you can also acquaint yourself with communities online. Dominick and his FilmDis discussions are one way I pay attention to representations of disabled people in mainstream media. Recommended. This is one example, there are many. I also follow Rikki and James on YouTube as well as The Tommy Edison experience. (links on the page)

A few words about the social model of disability.

The old model of disability was, briefly put: disabled people had medical problems, and those problems would have medical solutions so that people with disabilities could "fit in" or otherwise access a world that was built primarily for able-bodied people. Ergo, the disabled person is the problem to be solved. The social model identifies society as the cause of "disability," including the attitudes of people, physical barriers made by people, and information/communication barriers. People with impairments (visual, cognitive, auditory, others) become disabled by society. I'll be talking about communication barriers, but it's worth understanding them as part of a larger issue of inclusion.

Looking at design and access issues as helping wide swaths of people can sometimes help with buy-in. Curb cuts built with wheelchair users in mind can also be helpful for people with baby strollers or people who have difficulty navigating steps. Similarly, captions help lots of kinds of people. How do you know captions are taking off? When you see marketers using them in their Instagram ads/reels. People are using social media with the sound off, but marketers still want to sell them things.

Today's all about video, so I'm going to talk about accessibility and some tools for captioning and subtitling.

![Title: Captions vs Subtitles. Image of a man playing guitar with the caption 'singing like' and then in brackets [music]. Bulleted list: Captions (open or closed) assume the viewers can't hear. They are a transcription, with descriptive text. Subtitles assume the viewer doesn't speak the language. They are a translation.](images/images.009.jpg)

One vocabulary note... (this is my friend Phil playing guitar and you can see the caption where he's just playing music). Tools that do EACH of these things can be helpful but the words are not really interchangeable in all circumstances.

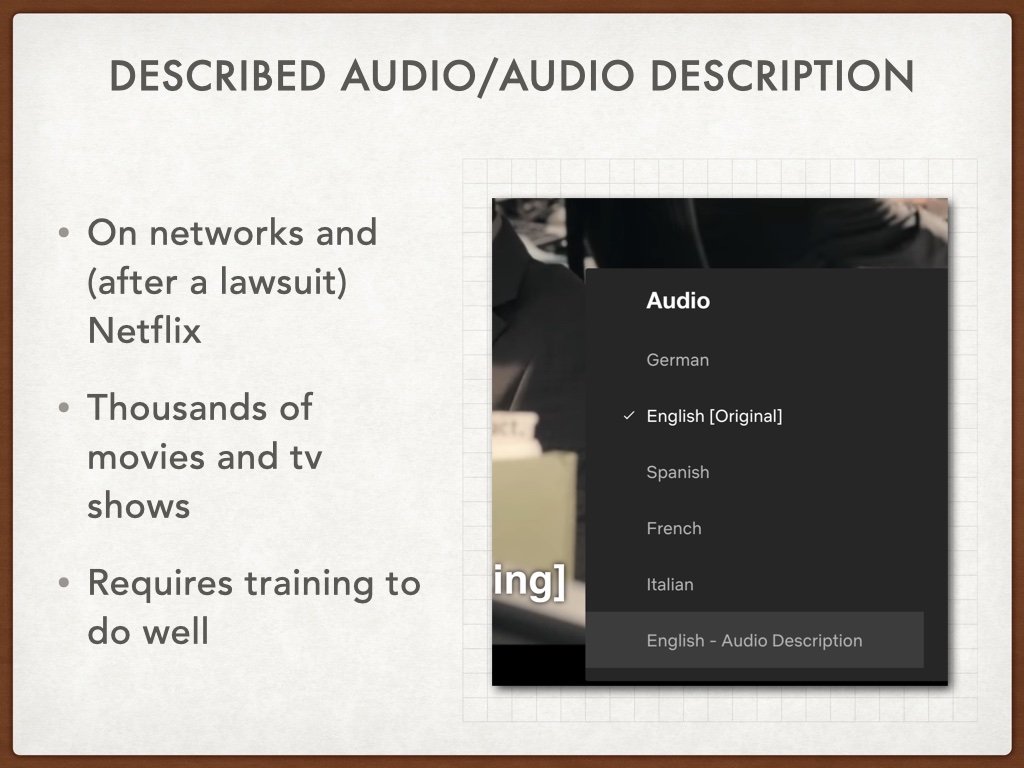

First off, I want to mention described audio, which is a secondary track added to visual material that tells what is happening on screen: movements, facial expressions, changes of scenery. (anecdote about watching Olympics) There is a lot of online content with described audio tracks. This is not quite the same as Verbal Description, which might accompany an art exhibit or other visual non-motion media.

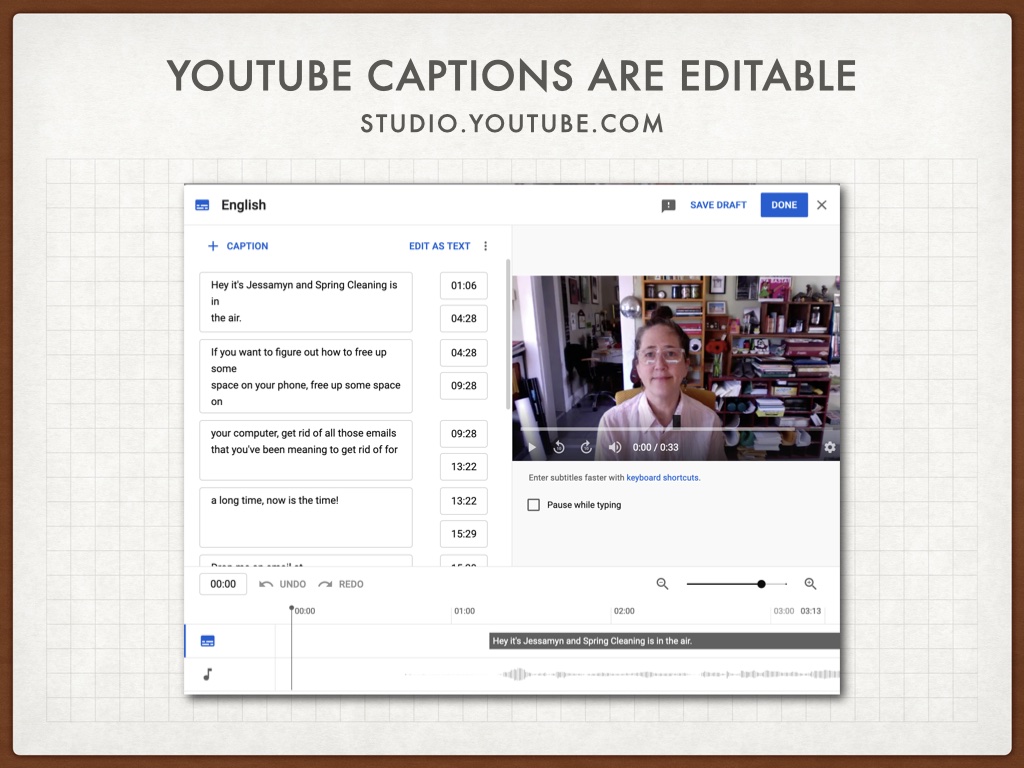

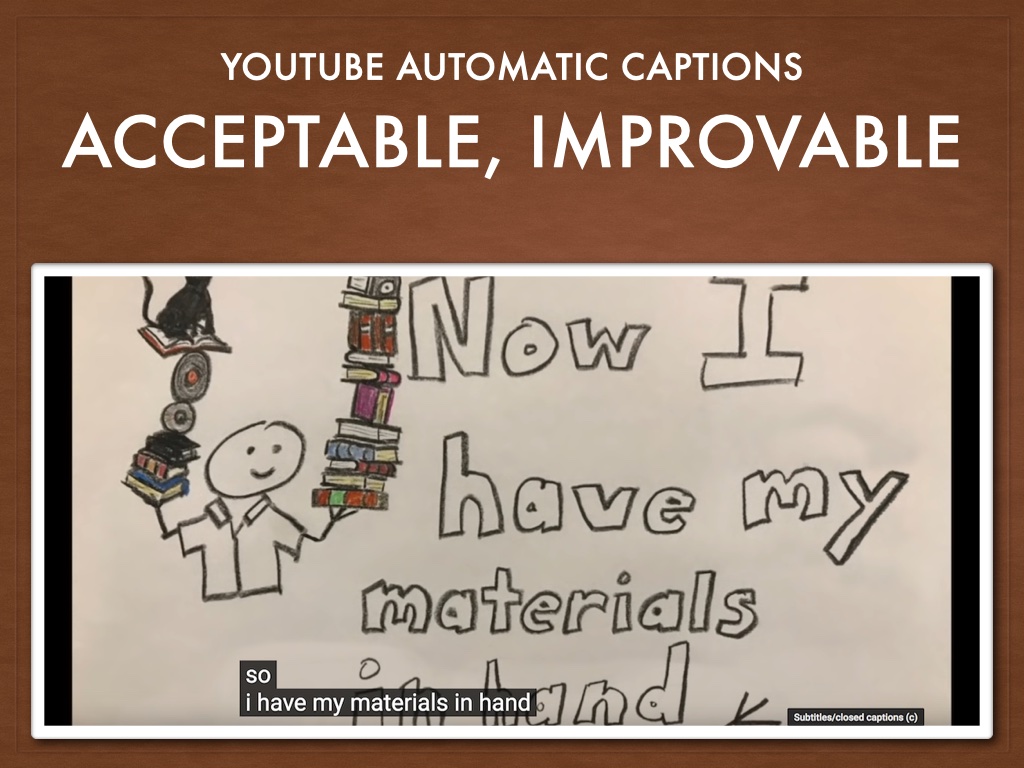

In terms of captions, YouTube can auto-generate captions, which are of decent quality, and then you can go in and make edits, correcting spellings and other parts of the transcript. This is good because, for example, YouTube's caption generator never knows how to spell my name even though it's right there in my username.

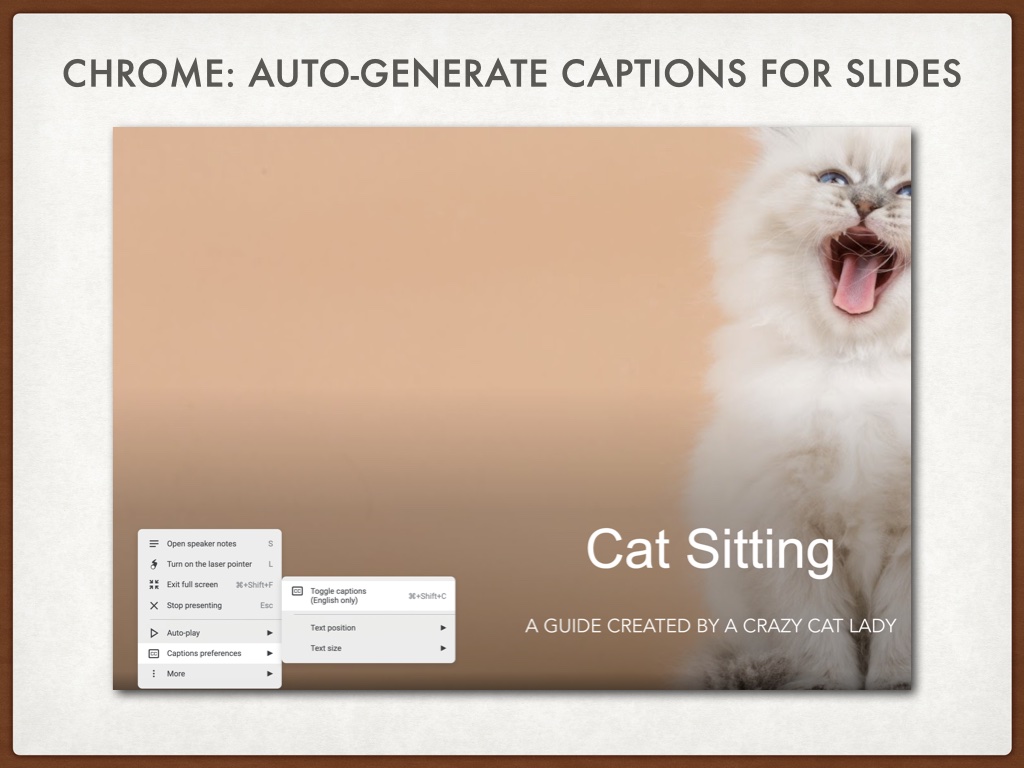

If you use Chrome, there is a great feature which will allow you to provide captions as you deliver a Google Slides presentation. This is also using "live captioning," which makes it sound like "a live person might be captioning this," but really it's the same old AI. And if you screen record your presentation, the captions will be right there as open captions. I'll be honest, I've messed around with this a little and had a hard time getting it to work, but friends of mine swear by it.

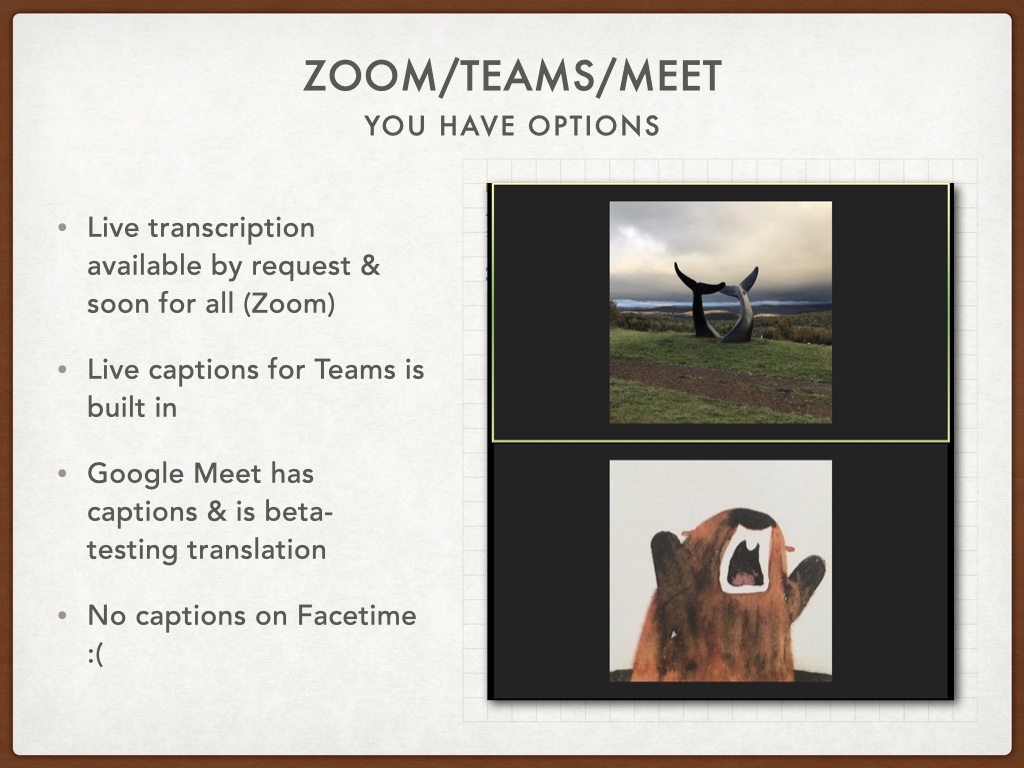

As far as events like this, there are options, most of them good. Zoom is aspiring to have live transcription (again, it's the robot) on all free and paid levels by "fall 2021," but any group can request this feature early and I suggest that you do. Teams has captions built in. Google Meet not only has captions but they're beta testing translated captions, meaning I could be speaking and, if my Spanish-speaking colleague were in the meeting, they might see my words translated into Spanish. I have not tried this. FaceTime has nothing. Speaking of mobile tools, many of the things I am talking about (browser plug-ins and some web-based tools) rely on having a desktop or laptop computer, and they don't all work on mobile.

There are some great free captioning tools, however, if you're not going the YouTube route. Amara is a great online tool where you can upload video and then have multiple people work on captions. You can even crowdsource captions for your videos like Scientific American does. It's free to use, but if you want to keep your videos private and NOT have them available to the public, there's a fee-based version. WGBH, a local-ish-to-me broadcaster, has created an offline tool called CADET (Caption And Description Editing Tool) which is super powerful and straightforward to use. If you look at their videos on YouTube, ones that they've captioned, you can really tell the difference between user-generated captions and AI-generated ones.

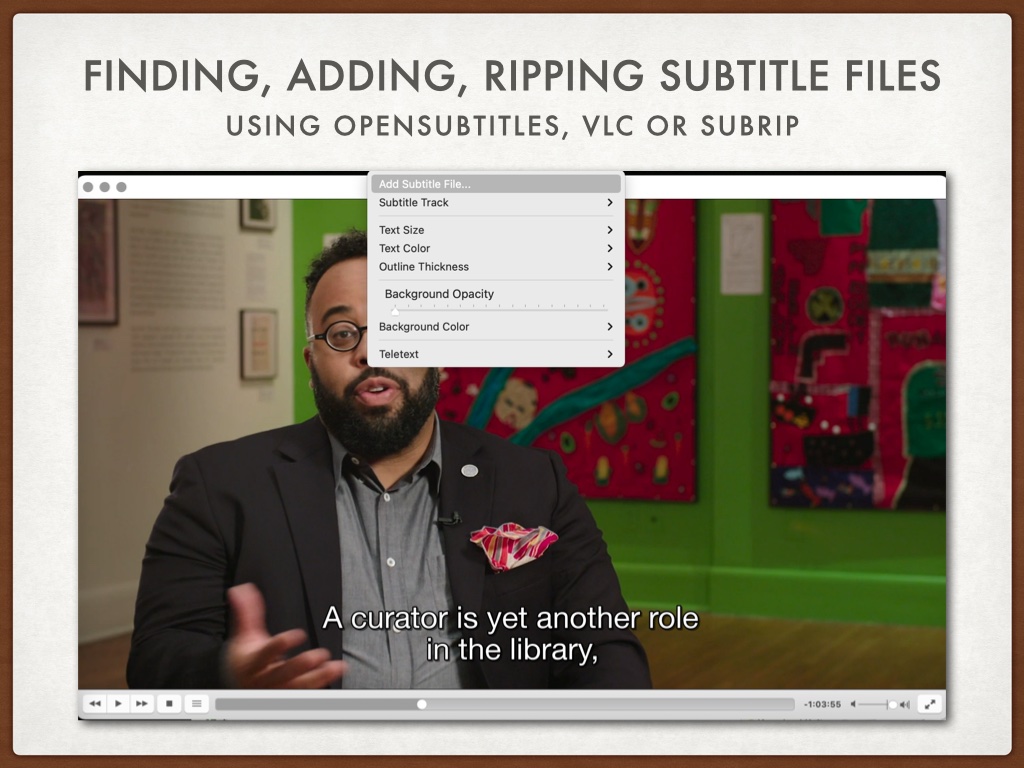

Or maybe you've got someone else's content and it doesn't have subtitles. .srt files are subtitle files that can be added to video content after the fact. You can add your own using the tools I've mentioned, or go find them on the open web using OpenSubtitles.com (or other sources, but that's a big one). There are HUGE fan communities that make sub files for things like English subs for anime originally in Japanese. Video players such as VLC will let you add a subtitle track to a video you've loaded. Here is a movie I just saw called The Booksellers. I found and added these subtitles (technically captions). You can also take content that has subtitles and rip the subtitles down to a text file to get an approximate transcript of video content. I do this a lot with C-SPAN, where maybe I want to know what happened but I don't want to watch a whole congressional hearing.

This is also true for older movies that may have been produced without subtitles originally.
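For anyone curious what "ripping subtitles down to a text file" looks like in practice, here is a minimal sketch in Python. It assumes a plain .srt file in the usual layout (a cue number, a timing line, then the text, with blank lines between cues). The function name `srt_to_transcript` is made up for this example, and it is not the method I use myself, just one way to do it with nothing but the standard library.

```python
import re

# Matches an SRT timing line such as "00:01:02,500 --> 00:01:05,000".
TIMING = re.compile(
    r"^\d{2}:\d{2}:\d{2}[,.]\d{3}\s*-->\s*\d{2}:\d{2}:\d{2}[,.]\d{3}"
)


def srt_to_transcript(srt_text: str) -> str:
    """Return the caption text of an SRT file, one cue per line,
    with cue numbers, timings, and simple formatting tags removed.
    (Hypothetical helper name, for illustration only.)"""
    transcript = []
    normalized = srt_text.replace("\r\n", "\n").strip()
    # Cues are separated by one or more blank lines.
    for block in re.split(r"\n\s*\n", normalized):
        cue = [line.strip() for line in block.split("\n") if line.strip()]
        # Drop the numeric cue index, if present.
        if cue and cue[0].isdigit():
            cue = cue[1:]
        # Drop the timing line, if present.
        if cue and TIMING.match(cue[0]):
            cue = cue[1:]
        # Remove tags such as <i>...</i> and join multi-line cues.
        text = " ".join(re.sub(r"<[^>]+>", "", line) for line in cue).strip()
        if text:
            transcript.append(text)
    return "\n".join(transcript)
```

To use it, read the subtitle file into a string (opening it with `encoding="utf-8-sig"` avoids trouble with a leading byte-order mark), pass that string to `srt_to_transcript`, and write the result to a text file. The output is only an approximate transcript: it keeps bracketed descriptions like [music] and has no speaker names unless the captions included them.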

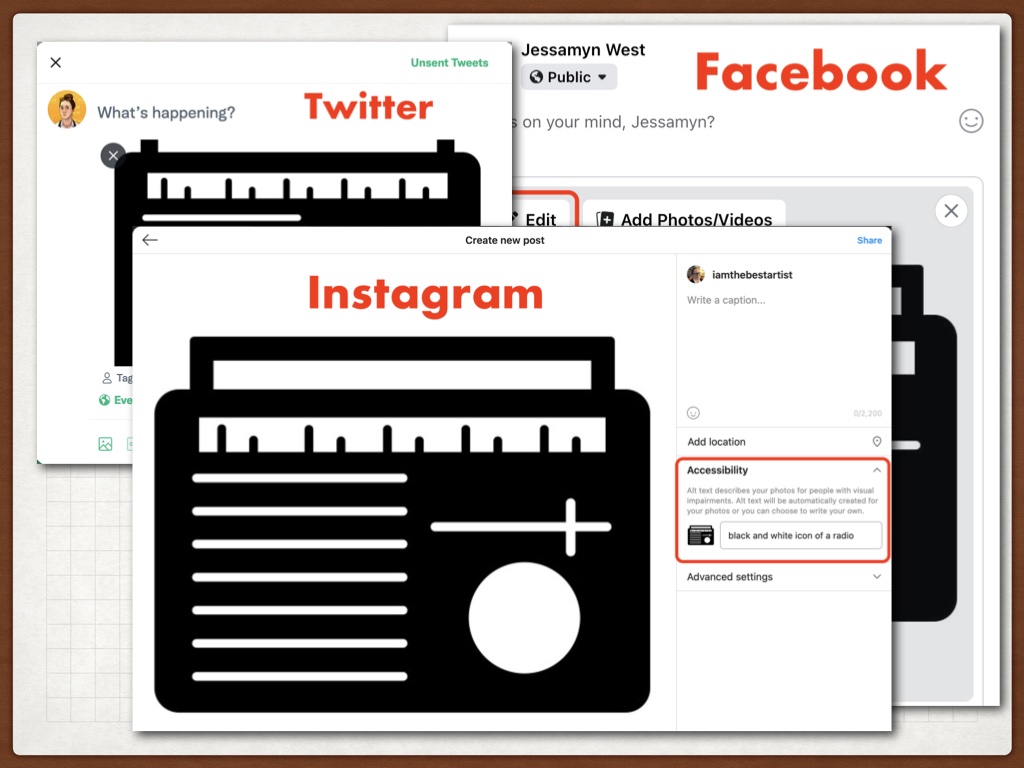

Social media is where I see people fail to use accessibility tools in places where I think it would benefit them the most and where the barrier to entry is incredibly low.

However, even though it's simple to type in alt text for an image, you have to FIND it first. Here is where to find a text entry box on three major social platforms. Two of these are even owned by the same company and they're still different! My links list has a few "how to" files which give advice on how to describe an image clearly and succinctly. Keep in mind that in an emotive space like social media your descriptions might be different from a more "just the facts" kind of space.

A few notes about alt text on Twitter and other socials. You can't add it after the fact. It's illuminating to be able to see others' alt text, though this ability is not built into any of the Twitter clients that I know of. You CAN use Greasemonkey (a browser add-on) and a plug-in for THAT (sounds technical but it's just clicking a few things to install), which allows you to see who is using alt text (not enough people) and what they are saying. Here's an example where, when you mouse over the image, you can see the user's alt text and their typo.

...

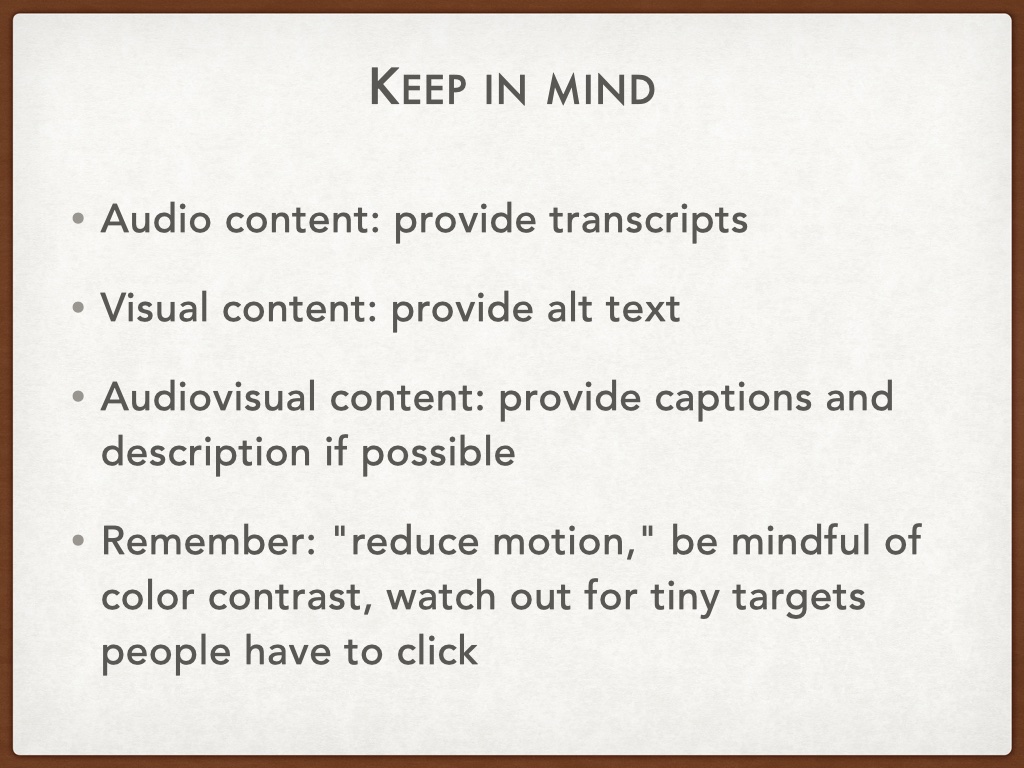

A few final thoughts about this.

As I mentioned before, YouTube's automatic captions are okay-not-great, but now you know how you can make them better. And this is the general thrust of this talk: "You can make this okay-not-great stuff better."

I was surprised when I started working on just bringing accessibility into my library and my own practice how few people prioritized it. To me it's kind of an obvious no-brainer for anyone working on inclusion topics. To that end, I encourage you to...

And thinking more along those lines, when we're thinking about accessibility....

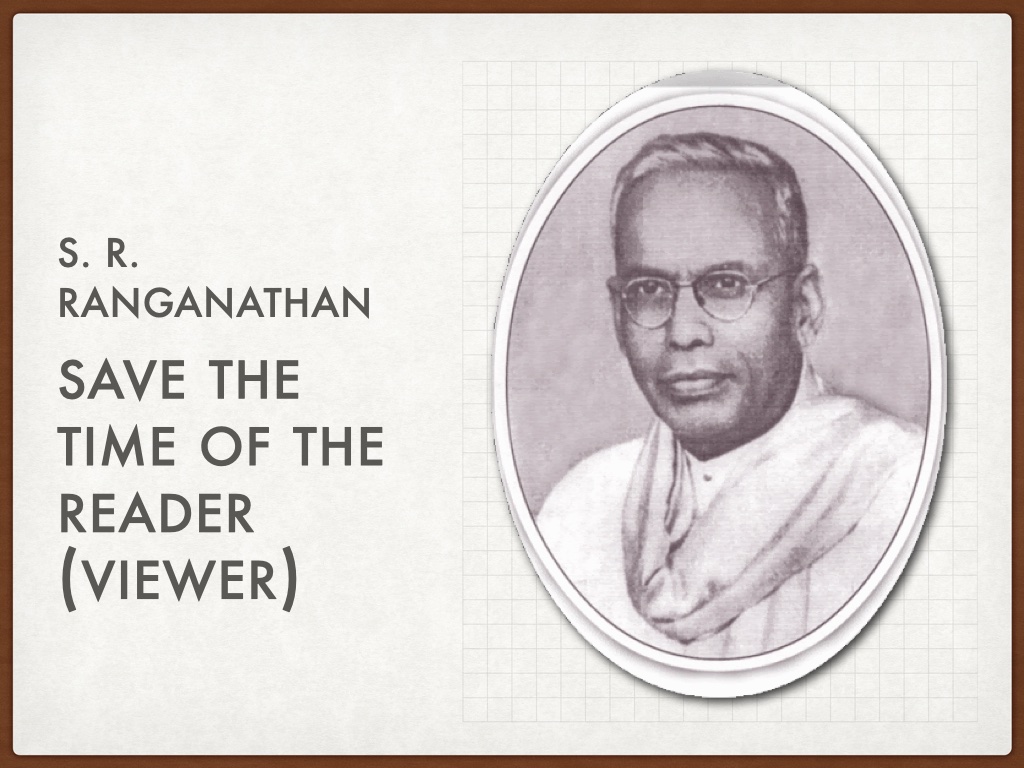

Obviously there are costs--time, money, effort--associated with this. However, what we want to be doing is linking our users, patrons, viewers, potential customers, with content we want to share with them. Originally a statement addressing the open vs. closed stacks dilemma, this brief statement is an excellent summary of addressing accessibility needs. Ranganathan also said "The library is a growing organism" in that it should be changing and improving to meet the information needs of its service population. I think building accessibility into every part of our workflow is one great way to do this.

Appreciate your time and attention, please feel free to get in touch.